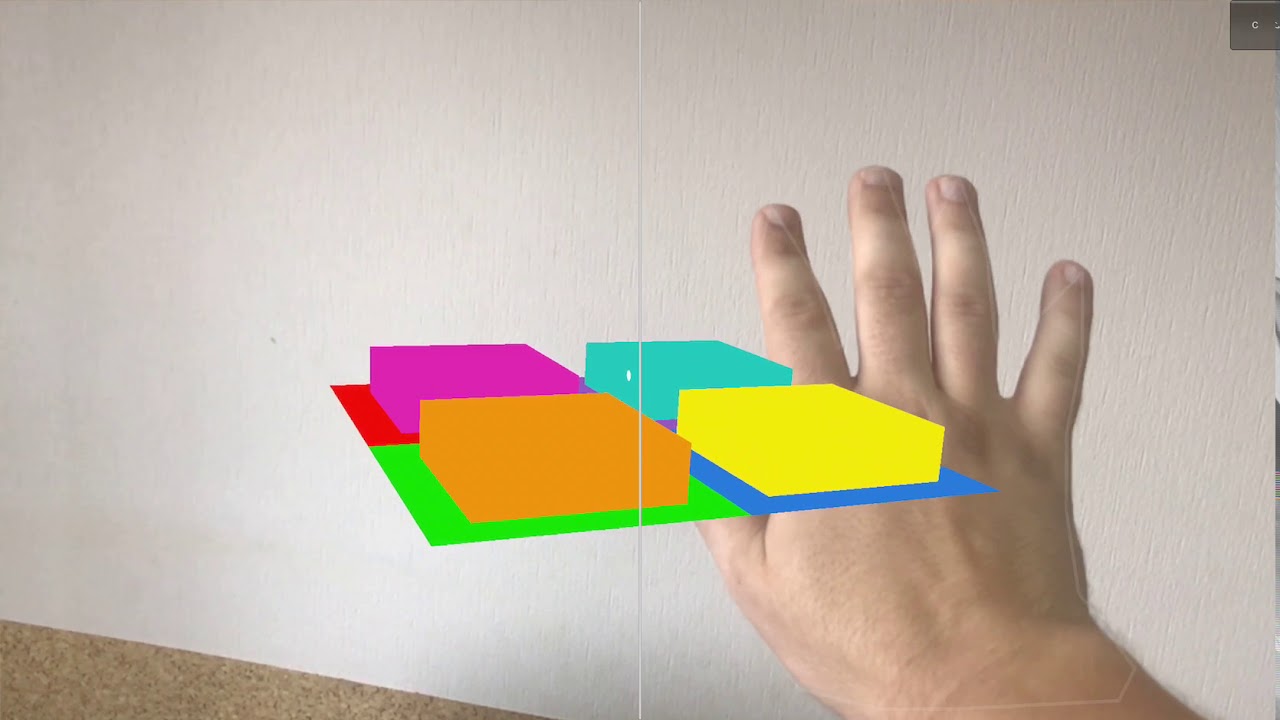

2020CV mobile hand tracking + Apple's ARKit YouTube

Hand tracking: Use the person's hand and finger positions as input for custom gestures and interactivity. Scene reconstruction: Build a mesh of the person's physical surroundings and incorporate it into your immersive spaces to support interactions. Image tracking.

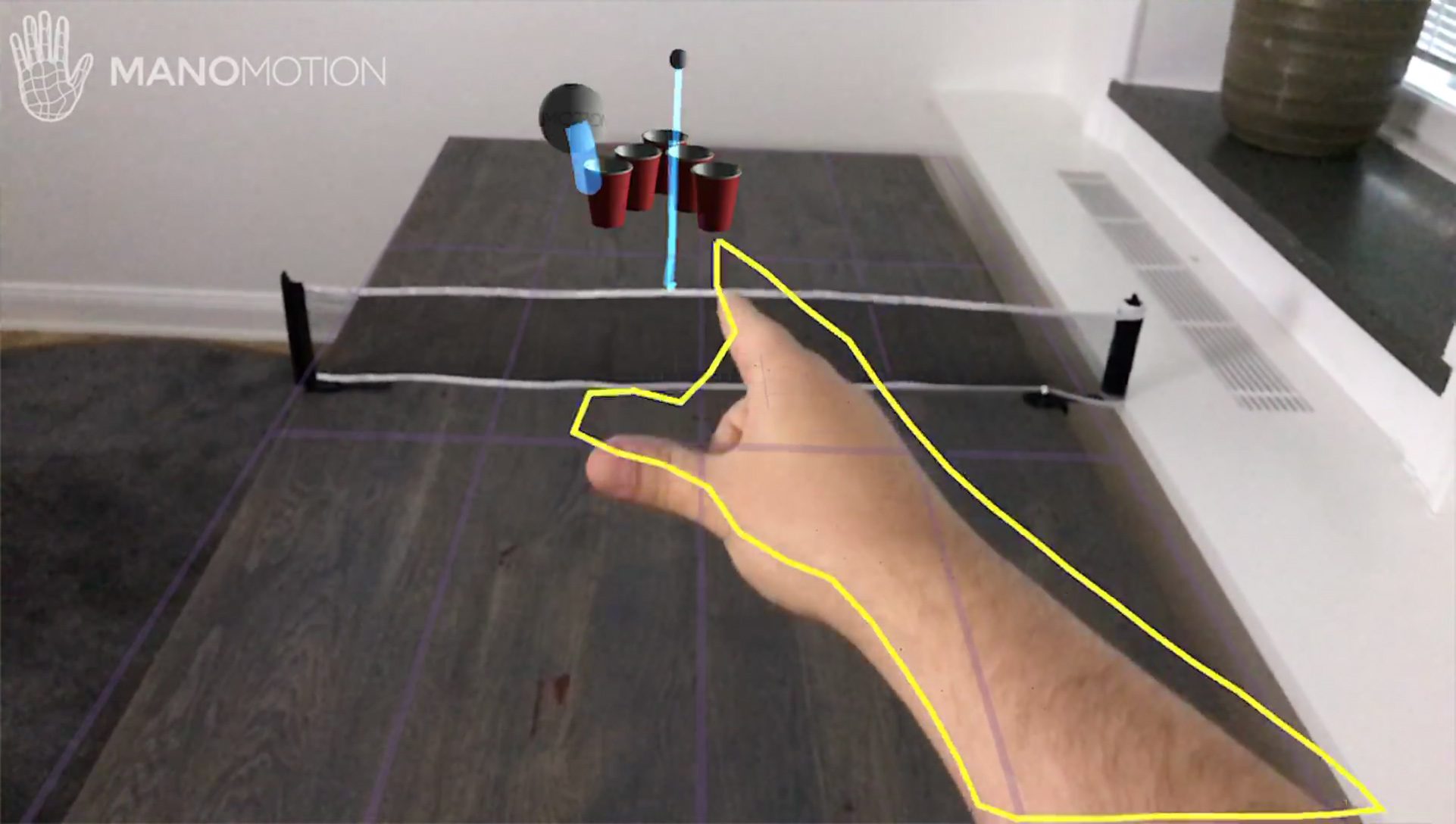

ManoMotion Brings Hand Gesture Input to Apple's ARKit Road to VR

Hand Pose 🙌. Hand tracking has been implemented in many AR mockups, and has also been demoed for real within ARKit before, but these demos used private APIs that companies and individuals built themselves. Here are some examples: 2020CV implementing basic hand tracking back in 2017; Augmented Apple Card with Hand Tracking (Concept)

Body Tracking with ARKit on iOS (iPhone/iPad) Vangos Pterneas

Motion Capture Capture the motion of a person in real time with a single camera. By understanding body position and movement as a series of joints and bones, you can use motion and poses as an input to the AR experience — placing people at the center of AR.
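To illustrate how a skeleton of joints and bones can act as input, here is a minimal, framework-agnostic sketch in Python. It does not call ARKit; it assumes you have already obtained 3D joint positions (in meters, with the y axis pointing up) from some body tracker. The function names `joint_angle` and `is_arm_raised`, and the 150 degree threshold, are hypothetical choices for illustration.

```python
import math

def joint_angle(a, b, c):
    """Return the angle in degrees at joint b, between segments b->a and b->c."""
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm = math.sqrt(sum(x * x for x in ba)) * math.sqrt(sum(x * x for x in bc))
    if norm == 0:
        raise ValueError("joints must not coincide")
    # Clamp to guard against floating-point drift outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cosine))

def is_arm_raised(shoulder, elbow, wrist, min_angle=150.0):
    """Hypothetical pose test: arm nearly straight and wrist above shoulder.

    Assumes y is the up axis; the threshold is an illustrative guess.
    """
    straight = joint_angle(shoulder, elbow, wrist) >= min_angle
    return straight and wrist[1] > shoulder[1]
```

A pose test like this would typically be evaluated once per tracked frame, with the result driving whatever the AR experience should do in response.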

ARKit / Manomotion Test Hand input YouTube

ARKit from Apple is a powerful tool that you can use to analyze your environment and detect features in it. Its power lies in the fact that you can use the features detected in the video to anchor virtual objects in the world and give the illusion that they are real.

The new handtracking AI by Google could be the new revolutionary product for speech impaired people

Neither the ARKit nor the ARCore SDK offers hand tracking support, though. Both companies expect you to use the touchscreen on your device to interact with digital assets in the real world.

Finger Tracking MIREVI

In order to interact with 3D objects, the hand would likely need to be represented in 3D. However, Vision's hand pose estimation provides landmarks in 2D only. By combining a few APIs, you may be able to get exactly what you're after. You would first use the x and y coordinates from the 2D landmarks in Vision's hand pose observations.
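The reply is cut off after the first step, so the following is only one plausible way to finish the idea, not the approach the original author describes. The sketch assumes you can read a depth value (in meters) at the landmark's pixel, for example from a depth map, and that you know the camera intrinsics `fx`, `fy`, `cx`, `cy`. It is written in framework-agnostic Python and uses the standard pinhole camera model; the function names are hypothetical. Vision reports normalized coordinates with the origin at the lower left, so the y value is flipped to get image pixel coordinates.

```python
def landmark_to_pixel(nx, ny, width, height):
    """Convert a normalized landmark (origin lower left) to pixel coordinates
    with the origin at the top left of the image."""
    return nx * width, (1.0 - ny) * height

def unproject(u, v, depth_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) with a known depth into camera space
    using the pinhole model. Returns (x, y, z) in meters."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)

# Example with made-up intrinsics for a 640x480 image.
u, v = landmark_to_pixel(0.5, 0.5, 640, 480)
point = unproject(u, v, depth_m=0.5, fx=500.0, fy=500.0, cx=320.0, cy=240.0)
```

The result is expressed in the camera's coordinate space; placing it in the world would additionally require the camera's transform, which is outside the scope of this sketch.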

Body Tracking with ARKit 3 and Unity3D (TUTORIAL) YouTube

Unity AR Foundation and CoreML: Hand detection and tracking. Jiadong Chen, published in The Programmer In Kiwiland, Jul 23, 2019. 0x00 Description: The AR Foundation.

Image tracking with ARKit tutorial part 2 YouTube

Track the Position and Orientation of a Face. When face tracking is active, ARKit automatically adds ARFaceAnchor objects to the running AR session, containing information about the user's face, including its position and orientation. (ARKit detects and provides information about only one face at a time.)

Image tracking with ARKit tutorial part 1 YouTube

2022 updated tutorial: https://youtu.be/nBZ-dglGow0 Access source code on Patreon: https://www.patreon.com/posts/60601004 In this video, I show you ste.

Real Finger Tracking in VRChat Beta (How to Guide) Quest 2 YouTube

Leverage a Full Space to create a fun game using ARKit. struct HandAnchor: A hand's position in a person's surroundings. struct HandSkeleton: A collection of joints in a hand. A source of live data about the position of a person's hands and hand joints.
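Once hand joint positions are available, a custom gesture often reduces to simple geometry on a few joints. The following is a minimal, framework-agnostic sketch in Python of a pinch detector; it does not use the ARKit types named above. It assumes you already have the thumb tip and index fingertip positions in meters. The class name `PinchDetector` and both distance thresholds are hypothetical values chosen for illustration; the two thresholds add hysteresis so the gesture does not flicker when the fingers hover near the boundary.

```python
import math

class PinchDetector:
    """Detects a thumb/index pinch with hysteresis.

    start_m: fingertips closer than this begin a pinch (assumed value).
    end_m:   fingertips farther than this end a pinch (assumed value).
    """

    def __init__(self, start_m=0.015, end_m=0.03):
        if start_m >= end_m:
            raise ValueError("start_m must be smaller than end_m")
        self.start_m = start_m
        self.end_m = end_m
        self.pinching = False

    def update(self, thumb_tip, index_tip):
        """Feed one frame of fingertip positions; returns the pinch state."""
        gap = math.dist(thumb_tip, index_tip)
        if self.pinching:
            if gap > self.end_m:
                self.pinching = False
        elif gap < self.start_m:
            self.pinching = True
        return self.pinching
```

In use, `update` would be called once per tracking frame, and the app would react to transitions between `False` and `True` rather than to the raw distance.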

How to Detect and Track the User’s Face Using ARKit LaptrinhX

ARKit is Apple's framework and software development kit that developers use to create augmented reality games and tools. ARKit was announced to developers during WWDC 2017, and demos showed.

IPad Pro 2020 LiDAR ARKit 3 AI body tracking / pose estimation in Binary Video Analysis. YouTube

Provide a light temperature of around 6500 Kelvin (D65), similar to daylight. Avoid warm or any other tinted light sources. Set the object in front of a matte, middle gray background. Scan real-world objects with an iOS app: The programming steps to scan and define a reference object that ARKit can use for detection are simple.

ManoMotion Introduces Apple ARKit Hand Gesture Support VRScout

It's possible that you can get fairly close positions for the fingers of a tracked body using ARKit 3's human body tracking feature (see Apple's Capturing Body Motion in 3D sample code). However, human body tracking requires ARBodyTrackingConfiguration, and face tracking is not supported under that configuration.

The new ARKit 3D hand tracking looks amazing, but most of the demos seem to be done with the Vision Pro which has far more sensors than other iOS devices. Will the ARKit 3D hand tracking also be available on iOS Devices with LiDAR?

6 VR Design Principles for Hand Tracking Ultraleap

Detect Body and Hand Pose with Vision. Explore how the Vision framework can help your app detect body and hand poses in photos and video.

Body Tracking Example Using RealityKit, ARKit + SwiftUI // Coding on iPad Pro YouTube

Understanding Hand Tracking & Body Pose Detection with Vision. The Vision framework lets you identify the pose of people's bodies or hands. It works by locating a set of points in the given visual data that correspond to the joints of the human body. As you can see in the figure on the right, Vision detects up to 19 body points.